Introduction

We previously used importance sampling in the case where we did not have a sampler available for the distribution from which we wished to sample. There is an even more compelling case for using importance sampling.

Suppose we wish to estimate the probability of a rare event. For example, suppose we wish to estimate where

. In this case, we can look up the answer

. But suppose we couldn’t look up the answer. One strategy that might occur to us is to sample and then estimate the probability by counting the number of times out of the total that the sample was bigger than 5. The flaw in this is obvious but let’s try it anyway.

> module Girsanov where

> import qualified Data.Vector as V

> import Data.Random.Source.PureMT

> import Data.Random

> import Control.Monad.State

> import Data.Histogram.Fill

> import Data.Histogram.Generic ( Histogram )

> import Data.Number.Erf

> import Data.List ( transpose )

> samples :: (Foldable f, MonadRandom m) =>

> (Int -> RVar Double -> RVar (f Double)) ->

> Int ->

> m (f Double)

> samples repM n = sample $ repM n $ stdNormal

> biggerThan5 :: Int

> biggerThan5 = length (evalState xs (pureMT 42))

> where

> xs :: MonadRandom m => m [Double]

> xs = liftM (filter (>= 5.0)) $ samples replicateM 100000

As we might have expected, even if we draw 100,000 samples, we estimate this probability quite poorly.

ghci> biggerThan5

0

Using importance sampling we can do a lot better.

Let be a normally distributed random variable with zero mean and unit variance under the Lebesgue measure

. As usual we can then define a new probability measure, the law of

, by

Thus

where we have defined

Thus we can estimate either by sampling from a normal distribution with mean 0 and counting the number of samples that are above 5 or we can sample from a normal distribution with mean 5 and calculating the appropriately weighted mean

Let’s try this out.

> biggerThan5' :: Double

> biggerThan5' = sum (evalState xs (pureMT 42)) / (fromIntegral n)

> where

> xs :: MonadRandom m => m [Double]

> xs = liftM (map g) $

> liftM (filter (>= 5.0)) $

> liftM (map (+5)) $

> samples replicateM n

> g x = exp $ (5^2 / 2) - 5 * x

> n = 100000

And now we get quite a good estimate.

ghci> biggerThan5'

2.85776225450217e-7

Random Paths

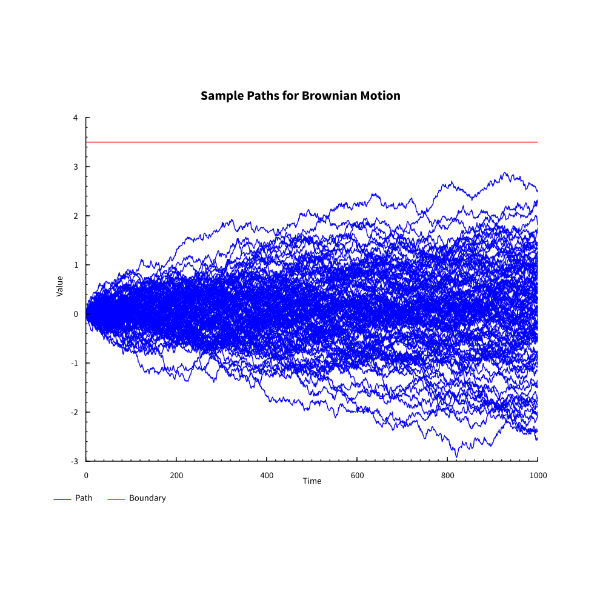

The probability of another rare event we might wish to estimate is that of Brownian Motion crossing a boundary. For example, what is the probability of Browian Motion crossing the line ? Let’s try sampling 100 paths (we restrict the number so the chart is still readable).

> epsilons :: (Foldable f, MonadRandom m) =>

> (Int -> RVar Double -> RVar (f Double)) ->

> Double ->

> Int ->

> m (f Double)

> epsilons repM deltaT n = sample $ repM n $ rvar (Normal 0.0 (sqrt deltaT))

> bM0to1 :: Foldable f =>

> ((Double -> Double -> Double) -> Double -> f Double -> f Double)

> -> (Int -> RVar Double -> RVar (f Double))

> -> Int

> -> Int

> -> f Double

> bM0to1 scan repM seed n =

> scan (+) 0.0 $

> evalState (epsilons repM (recip $ fromIntegral n) n) (pureMT (fromIntegral seed))

We can see by eye in the chart below that again we do quite poorly.

We know that where

.

> p :: Double -> Double -> Double

> p a t = 2 * (1 - normcdf (a / sqrt t))

ghci> p 1.0 1.0

0.31731050786291415

ghci> p 2.0 1.0

4.550026389635842e-2

ghci> p 3.0 1.0

2.699796063260207e-3

But what if we didn’t know this formula? Define

where is the measure which makes

Brownian Motion.

We can estimate the expectation of

where is 1 if Brownian Motion hits the barrier and 0 otherwise and M is the total number of simulations. We know from visual inspection that this gives poor results but let us try some calculations anyway.

> n = 500

> m = 10000

> supAbove :: Double -> Double

> supAbove a = fromIntegral count / fromIntegral n

> where

> count = length $

> filter (>= a) $

> map (\seed -> maximum $ bM0to1 scanl replicateM seed m) [0..n - 1]

> bM0to1WithDrift seed mu n =

> zipWith (\m x -> x + mu * m * deltaT) [0..] $

> bM0to1 scanl replicateM seed n

> where

> deltaT = recip $ fromIntegral n

ghci> supAbove 1.0

0.326

ghci> supAbove 2.0

7.0e-2

ghci> supAbove 3.0

0.0

As expected for a rare event we get an estimate of 0.

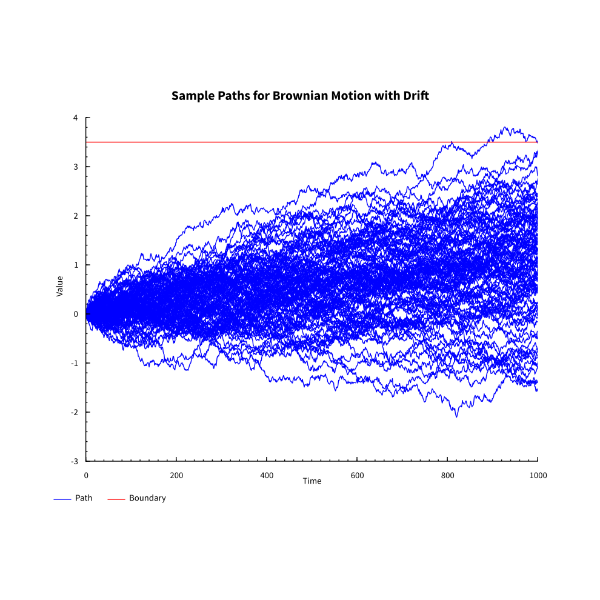

Fortunately we can use importance sampling for paths. If we take where

is a constant in Girsanov’s Theorem below then we know that

is

-Brownian Motion.

We observe that

So we can also estimate the expectation of under

as

where is now 1 if Brownian Motion with the specified drift hits the barrier and 0 otherwise, and

is Brownian Motion sampled at

.

We can see from the chart below that this is going to be better at hitting the required barrier.

Let’s do some calculations.

> supAbove' a = (sum $ zipWith (*) ns ws) / fromIntegral n

> where

> deltaT = recip $ fromIntegral m

>

> uss = map (\seed -> bM0to1 scanl replicateM seed m) [0..n - 1]

> ys = map last uss

> ws = map (\x -> exp (-a * x - 0.5 * a^2)) ys

>

> vss = map (zipWith (\m x -> x + a * m * deltaT) [0..]) uss

> sups = map maximum vss

> ns = map fromIntegral $ map fromEnum $ map (>=a) sups

ghci> supAbove' 1.0

0.31592655955519156

ghci> supAbove' 2.0

4.999395029856741e-2

ghci> supAbove' 3.0

2.3859203473651654e-3

The reader is invited to try the above estimates with 1,000 samples per path to see that even with this respectable number, the calculation goes awry.

In General

If we have a probability space and a non-negative random variable

with

then we can define a new probability measure

on the same

-algebra by

For any two probability measures when such a exists, it is called the Radon-Nikodym derivative of

with respect to

and denoted

Given that we managed to shift a Normal Distribution with non-zero mean in one measure to a Normal Distribution with another mean in another measure by producing the Radon-Nikodym derivative, might it be possible to shift, Brownian Motion with a drift under a one probability measure to be pure Brownian Motion under another probability measure by producing the Radon-Nikodym derivative? The answer is yes as Girsanov’s theorem below shows.

Girsanov’s Theorem

Let be Brownian Motion on a probability space

and let

be a filtration for this Brownian Motion and let

be an adapted process such that the Novikov Sufficiency Condition holds

then there exists a probability measure such that

-

is equivalent to

, that is,

.

-

.

-

is Brownian Motion on the probabiity space

also with the filtration

.

In order to prove Girsanov’s Theorem, we need a condition which allows to infer that is a strict martingale. One such useful condition to which we have already alluded is the Novikov Sufficiency Condition.

Proof

Define by

Then, temporarily overloading the notation and writing for expectation under

, and applying the Novikov Sufficiency Condition to

, we have

From whence we see that

And since this characterizes Brownian Motion, we are done.

The Novikov Sufficiency Condition

The Novikov Sufficiency Condition Statement

Let and further let it satisfy the Novikov condition

then the process defined by

is a strict martingale.

Before we prove this, we need two lemmas.

Lemma 1

Let for

be a non-negative local martingale then

is a super-martingale and if further

then

is a strict martingale.

Proof

Let be a localizing sequence for

then for

and using Fatou’s lemma and the fact that the stopped process is a strict martingale

Thus is a super-martingale and therefore

By assumption we have thus

is a strict martingale.

Lemma 2

Let be a non-negative local martingale. If

is a localizing sequence such that

for some

then

is a strict martingale.

Proof

By the super-martingale property and thus by dominated convergence we have that

We also have that

By Chebyshev’s inequality (see note (2) below), we have

Taking limits first over and then over

we see that

For and

we have

. Thus

Again taking limits over and then over

we have

These two conclusions imply

We can therefore conclude (since is a martingale)

Thus by the preceeding lemma is a strict as well as a local martingale.

The Novikov Sufficiency Condition Proof

Step 1

First we note that is a local martingale for

. Let us show that it is a strict martingale. We can do this if for any localizing sequence

we can show

using the preceeding lemma where .

We note that

Now apply Hölder’s inequality with conjugates and

where

is chosen to ensure that the conjugates are both strictly greater than 1 (otherwise we cannot apply the inequality).

Now let us choose

then

In order to apply Hölder’s inequality we need to check that and that

but this amounts to checking that

and that

. We also need to check that

but this amounts to checking that

for

and this is easily checked to be true.

Re-writing the above inequality with this value of we have

By the first lemma, since is a non-negative local martingale, it is also a supermartingale. Furthermore

. Thus

and therefore

Step 2

Recall we have

Taking logs gives

or in diferential form

We can also apply Itô’s rule to

where denotes the quadratic variation of a stochastic process.

Comparing terms gives the stochastic differential equation

In integral form this can also be written as

Thus is a local martingale (it is defined by a stochastic differential equation) and by the first lemma it is a supermaringale. Hence

.

Next we note that

to which we can apply Hölder’s inequality with conjugates to obtain

Applying the supermartingale inequality then gives

Step 3

Now we can apply the result in Step 2 to the result in Step 1.

We can replace by

for any stopping time

. Thus for a localizing sequence we have

From which we can conclude

Now we can apply the second lemma to conclude that is a strict martingale.

Final Step

We have already calculated that

Now apply Hölder’s inequality with conjugates and

.

And then we can apply Jensen’s inequality to the last term on the right hand side with the convex function .

Using the inequality we established in Step 2 and the Novikov condition then gives

If we now let we see that we must have

. We already now that

by the first lemma and so we have finally proved that

is a martingale.

Notes

-

We have already used importance sampling and also touched on changes of measure.

-

Chebyshev’s inequality is usually stated for the second moment but the proof is easily adapted:

- We follow Handel (2007); a similar approach is given in Steele (2001).

Bibliography

Handel, Ramon von. 2007. “Stochastic Calculus, Filtering, and Stochastic Control (Lecture Notes).”

Steele, J.M. 2001. Stochastic Calculus and Financial Applications. Applications of Mathematics. Springer New York. https://books.google.co.uk/books?id=fsgkBAAAQBAJ.